This Is Not the Dunning–Kruger Effect

But an artifact of the war on intuition.

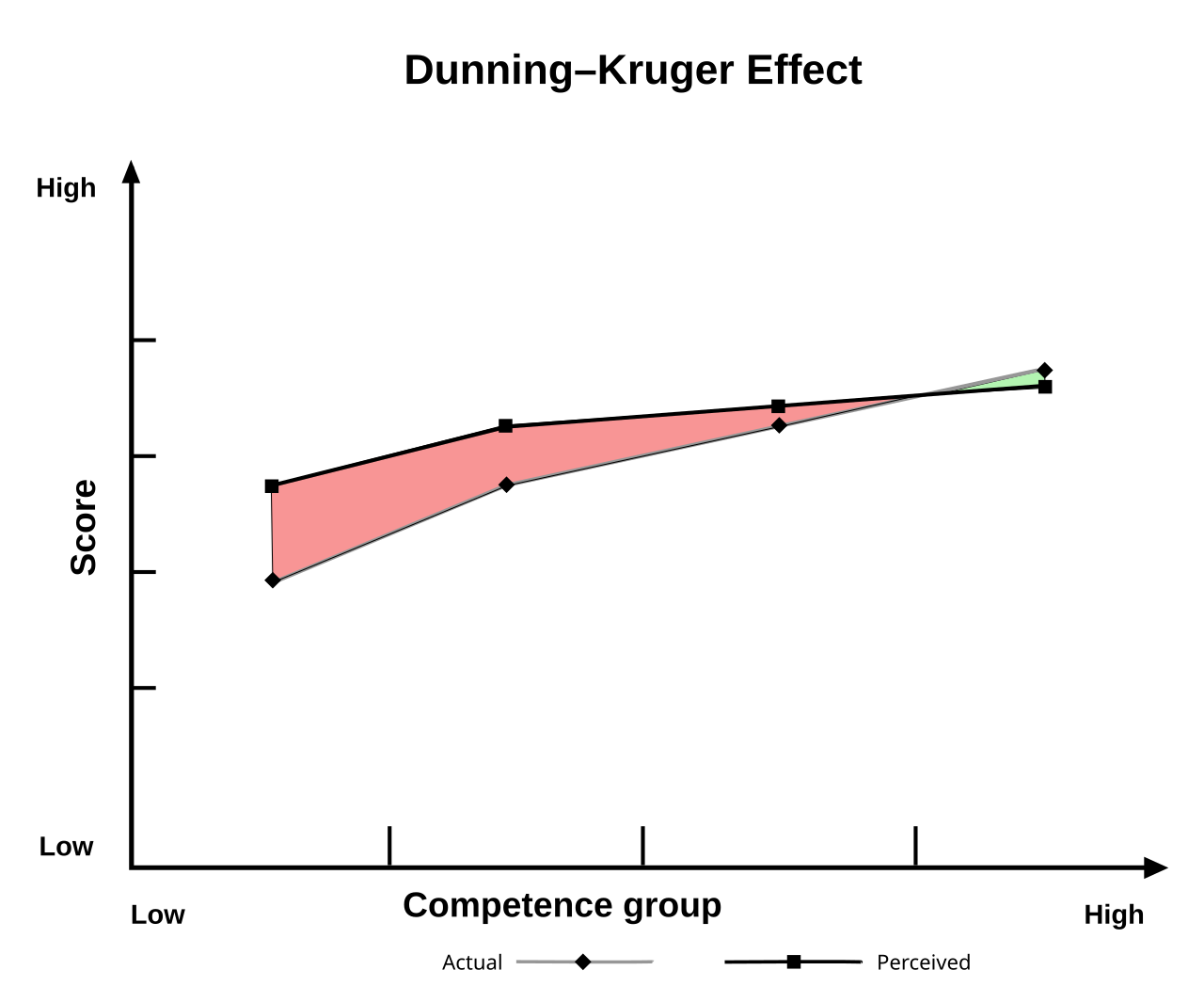

This is:

The first image is meant to illustrate the arrogance of beginners (Mount Stupid), the despair of intermediates (the Valley of Despair), and finally the slow rise of actual competence along a long tail.

Feels right, doesn’t it? It’s catchy, hence the famous and yet mostly made-up chart. Really, it mischaracterizes the actual effect so badly that you wonder if there’s some willful ignorance there.

Now, you can understand where the gist came from: Low performers are indeed overconfident in their skills and high performers are a little underconfident. Low performers do rank themselves consistently lower than high performers, just not quite low enough. But the actual disparity is not nearly as dramatic as the meme’d data. There is also no Mount Stupid and no Valley of Despair unless you get incredibly creative with your axes.

The overconfidence could be an arrogant blindness to reality, sure. Or it could be just a measurement artifact: maybe people rank themselves somewhat near the middle for almost anything. You would expect low performers to reasonably err toward the low middle and high performers toward the high middle, both extremes being off. This could be explained with modesty, social awareness, reasonable unwillingness to be too down on yourself, the fact that a middle guess is more likely than a marginal one, number-two pencil bias, or some mysterious fifth thing that will be reported in-depth on This American Life in ten years.
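To make the artifact idea concrete, here is a minimal simulation sketch. Every number in it is an assumption chosen for illustration, not a measurement: `pull` is a hypothetical parameter for how strongly people shade their self-ranking toward the 50th percentile, and `noise` is hypothetical random error in the guess. Nobody in this toy model is deluded. Everyone tracks their real standing and simply hedges toward the middle.

```python
import random
from statistics import mean


def quartile_summary(n=10_000, pull=0.6, noise=10.0, seed=0):
    """Return (mean actual percentile, mean self-ranked percentile) per quartile.

    Assumed toy model, not fitted to any data: each person's guess is their
    true percentile shrunk toward 50 by `pull`, plus Gaussian noise.
    """
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        actual = rng.uniform(0, 100)
        guess = 50 + (1 - pull) * (actual - 50) + rng.gauss(0, noise)
        guess = min(100.0, max(0.0, guess))  # keep the guess a valid percentile
        people.append((actual, guess))

    people.sort()  # order by actual skill, lowest first
    size = n // 4
    rows = []
    for i in range(4):
        chunk = people[i * size:(i + 1) * size]
        rows.append((mean(a for a, _ in chunk), mean(g for _, g in chunk)))
    return rows


if __name__ == "__main__":
    for i, (actual, guess) in enumerate(quartile_summary(), start=1):
        print(f"quartile {i}: actual {actual:5.1f}, self-ranked {guess:5.1f}")
```

Under these assumptions the bottom quartile ranks itself well above its real standing and the top quartile ranks itself below, while self-rankings still rise steadily with skill. That is the shape described above, produced without any arrogance in the model at all. It does not show that this is what happens in real people, only that the pattern alone cannot tell the two explanations apart.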

To jump to wanton delusion, in fact, feels to me like a data-Rorschach onto which our culture has projected its distrust of intuition. It suggests, for one, that only “experts” may have opinions on any given subject. It also suggests that you can “grind” your way to expertise with enough “education.” Neither is strictly true, in my experience. The implications are comforting, though, if you see yourself as an under-appreciated intermediate who will one day be a master.

Actually, if I think about it, it goes completely against experience. I don’t know the first thing about ice skating, and yet, if you show me people ice skating, within a minute it will become absolutely unambiguously clear who is the best at it. If you compared rankings with non-experts and experts alike, you would find clear consistency, which researchers have demonstrated. Likewise, if I put you in some skates and told you to compete in the Olympics, in no way would you be on top of some “mount stupid,” thinking you had a shot at gold. You would rightly evaluate yourself as “not good,” along with anyone else watching.

As with any comfortable lie, Mount Stupid has some truth to it: Beginner’s luck is a thing. Also, the chart aligns with what it subjectively feels like to learn new things: quick progress when starting something new and then a slowdown in the intermediate phases. That’s a real thing, but it has little to do with delusion.

I don’t want to be naive: Overconfidence does happen. I’m reminded of that talentless break dancer at the Olympic Games a couple of years ago. How are we supposed to explain that?

Good Lord, her name was Raygun. The cultural reaction to that event proves that we lack some important digestive enzyme to deal with delusion. We can’t say she’s immoral (antiquated, intuitive), so we’re forced to come up with a more “scientific” explanation of how someone who plainly can’t breakdance would somehow bend reality with her self-deceit profoundly enough to win the rare opportunity to humiliate herself on the world’s stage.

There of course was the predictable reaction of the compassion-above-truth crowd: who’s to say what’s “good” anyway? Nothing matters and so please let’s all be nice. Those voices were quickly shouted down by the commonsense crowd who said that her lack of talent is objective and she should be ashamed for taking the spot of someone who actually worked hard. Once that dust settles, it’s time for a viral video essay from Vox or something explaining how “cognitive biases” “actually” work and how if you’re not always on high alert, you may fall victim to delusions, too. I don’t know if that video was produced in this particular case, but I wouldn’t be surprised.

The commonsense-style reaction has gotten louder and more explosive in recent years (the New Right), but its proponents ultimately don’t win over the intelligentsia, so they don’t get the last word. The fashionable cultural sensibility, on the whole, was pioneered last century by Danny Kahneman with his work on cognitive biases, which culminated in his massively popular book Thinking, Fast and Slow.

If you haven’t read it, you probably have absorbed it through unconscious osmosis: System 1 is fast, intuitive, and prone to error; System 2 is slow, painful, and takes effort. The book goes through all the common and likely errors and delusions of cognition produced by System 1 (intuition) and how you probably couldn’t correct for them even if you tried. It paints human beings as hopelessly irrational (how one of them managed to write this very rational book is left a mystery). I won’t go into the examples he shows, but suffice it to say they are very convincing. It was a devastating blow to my intuition in my 20s. You come away from the thousands of video essays inspired by it with just a little less vitality in you, less ability to trust yourself.

You are invited to replace your instincts with knowledge: a little memorized chart of common cognitive biases to prevent you from being a normal person and instead be Less Wrong. You’re just a little more prepared to argue with your Fox News-watching uncle, and a little less in love with humanity. Maybe this would be a sacrifice worth making if it produced highly rational and effective people, but it doesn’t. Hordes of young rationalists flock to Silicon Valley with the idea that they will make themselves Less Wrong until they are hot, cool, and rich. I’ve seen them try. It doesn’t work.

Danny Kahneman himself observed this in his own students. No matter what he told them, they were still prone to biases. Probably everybody thinks they are the exception to the rule. Or maybe people look at that Dunning–Kruger chart and believe they can grind their way to the far right tail of that curve. But, remember, that chart is made up. Even according to Danny, you actually can’t do much to change your System 1 thinking with System 2.

If it’s so hopeless, Danny, then why are we deconstructing our intuition in the first place? The vast majority of the time it’s right. He knows it. He spent his entire career finding edge cases where it fails. Fair enough. But maybe we should think of those as analogous to optical illusions: just because you can construct an image that momentarily confounds your automatic optical reasoning doesn’t mean you should swear off trusting your eyes.

Researchers too often come to the conclusion that we should distrust our intuition. Perhaps that’s because they think real life is like their lab. In a lab, a subject is given a pre-determined task within tight parameters that has correct outcomes as determined by an omniscient third party, who is observing and resisting intervention. Overconfidence “bias,” for example, assumes this omniscient third agent capable, in real time, of assessing what is objectively true. But this isn’t how things unfold in reality.

“Overconfidence” in a moral person is just a statistical artifact of the necessity to reach beyond his grasp in order to fail iteratively toward developing a successful and embodied understanding of a given project. In real life, there is no “correct” vantage from nowhere. Action always must be approximated by more or less limited subjectivity. Reality ultimately requires leaps of “faith” to even interact with enough to produce useful “data” to be measurable in hindsight.

We have been deeply conditioned to distrust what I’m about to say, so bear with me: If our cognition were built on a seriously systematically “irrational” bias, given the state of the cosmos being filled with infinite unknowns contained in unknown unknowns, it would be phased out by natural selection. It isn’t, so we should assume a pragmatic function (even if it is beyond our ability to describe with linear logic), and work backwards to try to explain it from there. Instead, moderns assume irrationality and work to “debunk” from the vantage of how they assume things would be best arrayed if they were the clockwork god of Descartes. Then they sell a million books teaching the masses to all be better than humans by being like a machine, which isn’t possible or even helpful. The cosmos isn’t set up like a laboratory or a computer. If you hoped to control things, you might hope it were, but it isn’t.

This doesn’t mean we can’t still notice that some people are prone to make errors in their perceptions. For example, imagine four candies spread out over a large area next to five candies bunched close together. If you ask a child which one is more candy, he is likely to select the four candies spread further apart. Basically, he is taking a low-resolution heuristic that says something like “more space = more candy” and misapplying that. As a wiser adult, it’s easy to see his cognitive illusion and help him amend it. You could call it the More Volume Does Not Necessarily Equal More Mass fallacy, but at that point what are we doing?

At various levels, the error of improperly mapped heuristics probably happens to you and me every day. Wiser people may be able to help us “see” our mistakes: the adult is to the child what the sage is to the adult. None of this implies there is anything fundamentally wrong with the way we perceive. It only implies that perception always takes place at different levels of resolution. Higher levels are less precise in detail, but more accurately capture the gestalt, and lower levels reveal illusions but sometimes mistake a forest for trees.

My wife has been acting since she was four. She has done the hard work of looking at the art of human expressiveness under scrutiny that I can’t imagine. Thus, she can literally see things in the details of movie performances that are invisible to me. When she points them out, I squint, go “woah” and then suddenly a pattern is revealed to me that I now see everywhere in other movies.

Master painters have trained themselves to see three hundred shades of blue where I see three. It’s not that my low-resolution impression of blue is wrong. It’s actually necessary for my cognition because I can’t process every quantum in infinite detail. I haven’t made it an embodied practice to know the fractal unfoldings of the relationships of color in the way that I have in, for example, language. We all have the ability to move up and down levels of resolution, but not infinitely and not instantly. There is no View from Nowhere that can account for all levels simultaneously. Human consciousness is a constant negotiation of these levels. Being good at that is what we call wisdom: the ability to know which level to be at when.

The Kahneman worldview undermines our ability to move up and down levels of perception by positing a universally true machine-like perspective we should all aspire to take. Unfortunately for that worldview but fortunately for us normal people, there is no context-free perspective. To the extent that you assume there is, you cripple your ability to negotiate frames in real time.

Think about the fact, for a cultural example, that we still have hedge fund managers working today, despite the fact that it has been statistically proven that they lose in the long run compared to just plopping your money into an index fund that tracks something like the S&P 500. According to the Kahneman thinkers, we should be able to demonstrate how the entire industry is built on a cognitive bias and millions of rich people would stop wasting their money.

Except that doesn’t happen. Hedge funds are thriving. From the higher level of wealth over decades, this is irrational. But when you zoom in, subjective fear and uncertainty come into play, which the hedge funds are specifically designed to assuage in the near-term. It doesn’t matter if you might become technically richer over a thirty-year period if the way to do it is to stick a large sum of money in an index fund. The people who depend on that money would not think kindly of that. Maybe they are wrong like the children with the candy, but you’d need to be an incredibly wise and trustworthy person to shift a cultural bias away from short-term certainty and toward long-term trust. We used to call those people prophets.

The “cognitive bias” outlook is nearly void of wisdom. It fails to see that all choices are deeply contextual, at various levels of depth, and intersubjectively moral in nature. Sticking a billion dollars in an index fund affects many interested parties, all operating at various levels of resolution. Real wisdom is realizing that these externalities are just as much a cost to factor in as dollars are. Stop aspiring to calculate all that and grow your wisdom. Grow a chest as well as a head.

Raygun is not an innocent victim of her cognitive biases. She weighed the contextual web of her options and realized that she could take advantage of a connection and her sport’s partial reliance on taste rather than the hard numbers of, say, a 100-meter sprint. That was an immoral choice, not a “biased” one. We know because it added up to a performance that we clock as icky.

And why can we judge it as such? Because we actually have a highly attuned intuition for morality. It is extremely reliable and useful. The crowd perceived her life choices to be opportunistic and self-aggrandizing. She may not have been fully conscious of her moral failure, but hopefully her humiliation was a wake-up call.

So, no, you are not likely to fall for a similar delusion. You can’t protect yourself from it with materialistic vigilance anyway. Fundamentally, these are moral choices. If you lose faith in that and instead try to “game” the system by doing things like memorizing a list of cognitive biases, you will become an evil weirdo, and anyone with a functioning intuition will clock it instantly.

Love this. I have also come to distrust people who try to "educate" their way out of a need for common sense. Or who try to encourage others to do so. The world was built on common sense, and it worked quite well. And ever since everyone became educated and started arguing over the definitions of words, there are many things that have only gotten worse.

I had to reread that "bear with me" sentence 4 times, but I think I finally understand it. If our thinking was hideously and ineffectively irrational, we would have evolved out of it by now. Because it would be ineffective, ill adaptive. So, by definition, it isn't. In other words, we have intuition and common sense because they are useful across time. Because we must. The proof is that we're alive, in a world filled with more information than we can possibly contemplate.

Very clever, I like it.